The instructions above do it in a non-destructive way.

You can also overwrite the folder if you have no changes you want to keep, with git checkout examples in this case, or do a reset. Either way, you have to do it after you have disabled the filters, and then remember to re-enable them. If you made local changes and want them back, stash them beforehand and run git stash pop afterwards.

Currently the main BLAS (Basic Linear Algebra Subprograms) implementations are OpenBLAS, MKL, and ATLAS. If you use numpy and pytorch that are linked against different BLAS libraries, you may experience segfaults if the two libraries conflict with each other. Ideally, all the modules that you use (scipy too) should be linked against the same BLAS implementation. To check which library a package is linked against, inspect its build configuration; numpy and scipy, for example, print theirs via show_config().
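As a rough illustration of that check, the sketch below prints the build configuration of each package that happens to be installed, so the BLAS entries can be compared by eye. The helper name show_build_config is made up for this example; it relies on numpy and scipy exposing show_config() and on pytorch exposing torch.__config__.show(), and it simply reports when a package is missing.

```python
import importlib


def show_build_config(module_name):
    """Print a package's build configuration, if it is installed and exposes one.

    Hypothetical helper for illustration. Returns True if a configuration
    was printed, False otherwise.
    """
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        print(f"{module_name}: not installed")
        return False

    # numpy and scipy expose show_config() at the top level; it prints directly.
    show = getattr(module, "show_config", None)
    if callable(show):
        print(f"--- {module_name} ---")
        show()
        return True

    # torch exposes its build summary via torch.__config__.show(),
    # which returns a string instead of printing it.
    config = getattr(module, "__config__", None)
    show = getattr(config, "show", None)
    if callable(show):
        print(f"--- {module_name} ---")
        print(show())
        return True

    print(f"{module_name}: no build configuration found")
    return False


if __name__ == "__main__":
    for name in ("numpy", "scipy", "torch"):
        show_build_config(name)
```

Look for the BLAS/LAPACK entries (for example mentions of openblas or mkl) in each package's output; if they name different implementations, that mismatch is a plausible suspect for the segfaults described above.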

Install ipython in pytorch install

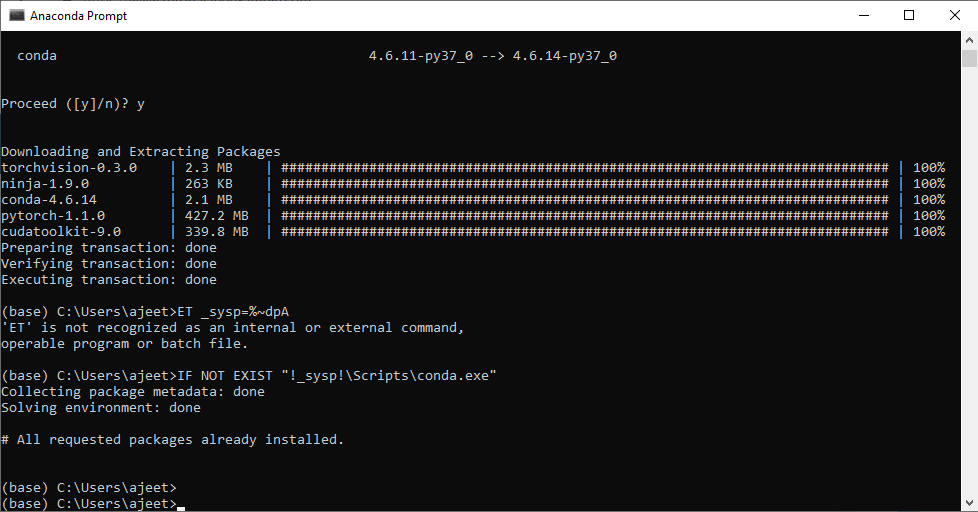

In general, it is best to create a new dedicated conda environment for fastai and not install anything there other than fastai and its requirements, and keep it that way. If you do that, you will not have any such conflicts in the future. Of course, you can install other packages into that environment, but keep track of what you install so that you can revert them if such a conflict arises again. When you install something, conda will usually tell you if it needs to downgrade, upgrade, or remove other packages that prevent it from installing normally. What follows are only guidelines; you will need to find your platform-specific commands to do it right.
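One possible way to keep track of what you install is to snapshot the environment before and after each change and compare the two. The sketch below does this with the standard-library importlib.metadata module; the function names snapshot_packages and diff_snapshots are invented for this example, and it only sees Python distributions, so conda list remains the fuller record for a conda environment.

```python
from importlib import metadata


def snapshot_packages():
    """Return {distribution name: version} for the active Python environment."""
    snapshot = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        version = dist.metadata.get("Version")
        if name:  # skip broken entries that carry no name
            snapshot[name.lower()] = version
    return snapshot


def diff_snapshots(before, after):
    """Report packages added, removed, or changed between two snapshots."""
    added = {n: v for n, v in after.items() if n not in before}
    removed = {n: v for n, v in before.items() if n not in after}
    changed = {
        n: (before[n], after[n])
        for n in before.keys() & after.keys()
        if before[n] != after[n]
    }
    return {"added": added, "removed": removed, "changed": changed}


if __name__ == "__main__":
    # Take one snapshot now; take another after installing something
    # and pass both to diff_snapshots() to see what the install touched.
    current = snapshot_packages()
    print(f"{len(current)} distributions installed")
```

The "changed" entry is the interesting one after an install: it lists every package whose version was silently upgraded or downgraded, which is what you would need to revert.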

Install ipython in pytorch drivers

We refer to the project and its packages as pytorch, but inside python we use it as torch. First, starting with pytorch-1.0.x it doesn’t matter which CUDA version you have installed on your system; always try first to install the latest pytorch, since it has all the required libraries built into the package. Note, however, that you will most likely need a 396.xx+ driver for pytorch built with cuda92. For older drivers you will probably need to install pytorch with cuda90 or even earlier.

The only thing you need to ensure is that you have a correctly configured NVIDIA driver, which you can usually test by running nvidia-smi in your console. If nvidia-smi works and pytorch still can’t recognize your NVIDIA GPU, most likely your system has more than one version of the NVIDIA driver installed. If that’s the case, wipe any remnants of NVIDIA drivers on your system, and install one NVIDIA driver of your choice.

When pytorch fails to detect the GPU despite nvidia-smi working just fine, it means that pytorch can’t find the NVIDIA drivers that match the currently active kernel modules.
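The two checks discussed here can be combined into one small script. This is only a sketch: the function names are invented for illustration, it assumes nvidia-smi is on your PATH when the driver is installed, and it treats a missing pytorch install as a separate case rather than a failure.

```python
import shutil
import subprocess


def nvidia_smi_works():
    """Return True if nvidia-smi is on PATH and exits successfully."""
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return False
    try:
        result = subprocess.run([exe], capture_output=True, text=True, timeout=30)
    except (OSError, subprocess.TimeoutExpired):
        return False
    return result.returncode == 0


def torch_sees_gpu():
    """Return torch.cuda.is_available(), or None if pytorch is not installed."""
    try:
        import torch
    except ImportError:
        return None
    return torch.cuda.is_available()


def explain(smi_ok, gpu_ok):
    """Turn the two check results into a short hint (illustrative wording)."""
    if gpu_ok is None:
        return "pytorch is not installed in this environment"
    if gpu_ok:
        return "pytorch can use the GPU"
    if smi_ok:
        return ("nvidia-smi works but pytorch sees no GPU: check whether more than one "
                "NVIDIA driver version is installed, or whether the driver is too old "
                "for this pytorch build")
    return "nvidia-smi is not working: fix the NVIDIA driver install first"


if __name__ == "__main__":
    print(explain(nvidia_smi_works(), torch_sees_gpu()))
```

The third branch is the situation described above: the driver answers nvidia-smi, yet pytorch cannot find a driver matching the active kernel modules.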